What the Machine Saw

An algorithm read my memoir and found a different book than the one I wrote.

Subscribe · E-PUB · Kindle/Paperback/Hardcover/Audiobook

From third grade through eighth, I attended the Whitby Montessori School in Greenwich, Connecticut. There I had the privilege of studying under the most influential teacher of my entire academic training. Mr. Paul Czaja showed us how to use a 35 mm camera, develop the film and make prints. He also took us to Times Square in New York City, where we were able to shoot 16 mm black-and-white film and edit it afterwards. He helped us create our own school newspaper, which we hand-set with real type and printed on a real printing press. He also gave us a unique creative writing class that started the same way every time. Five to ten minutes of meditation. Silence. Eyes closed. Then, when you were ready, you walked to the front of the room where he kept a basket full of laminated photographs: images he’d collected from magazines, calendars, wherever. You chose the one that spoke to you. Took it back to your desk. Contemplated it. And freely wrote whatever came.

No prompts. No thesis statements. No instructions beyond: sit with the image until something opens, then follow it inward. Afterwards, we published our work in a mimeographed literary magazine he named “Caedmon” after the earliest English poet known by name, dubbed the “Father of English Sacred Song.”

Caedmon’s Hymn (c. 7th century)

Now we must honour the guardian of heaven,

The might of the architect, and his purpose,

The work of the father of glory, as he,

The eternal lord, established the beginning of wonders.

He first created for the children of men

Heaven as a roof, the holy creator.

Then the guardian of mankind,

The eternal lord, afterwards appointed

The middle earth, the lands for men, the Lord almighty.

I have worked that way my entire creative life. Image first. Feeling second. Words last. The process moves from the outside in — a visual lands, something stirs, and you write your way toward whatever it stirred. It’s an inward-facing method, and it produced every page of Surfing the Interstates, the memoir I spent two years writing about hitchhiking four thousand miles across America in 1973.

Fifty-three years after that journey, and sixty-three years after Mr. Czaja’s classroom, I watched a machine do the exact opposite.

I was checking the final PDF of the second edition in Adobe Acrobat when I noticed a button I’d never seen before. “Create Summary.” So I pressed it, the way you press any button you’ve never pressed, which is to say without thinking.

What came back was strange. Not wrong, exactly. But strange the way a photograph of your house taken from a satellite is strange. You recognize the roof. You can see where the driveway meets the road. But you’d never describe where you live that way.

The algorithm organized my book into twenty-some thematic categories with titles like “Personal Journey and Family Background” and “The Search for Freedom and Self-Expression.” Academic. Bloodless. The kind of language that takes a kid standing on the shoulder of I-80 with his thumb out and turns him into a “narrator reflecting on displacement and inherited trauma.”

Which — fine. He was. But he didn’t know that. He thought he was hitchhiking to California.

That’s the first thing the machine missed. Not the facts but the ignorance. The not-knowing that drives every page of that book. I was twenty-one. I didn’t have themes. I had a backpack, a guitar, some Colombian weed, and a very clear need to be somewhere other than where I was.

Mr. Czaja would have understood. You don’t pick a photograph from the basket because you already know what it means. You pick it because it pulls at something you can’t name yet. The writing is the naming. The algorithm skipped that part entirely. It started with the names.

But here’s what surprised me. The summary did notice real things — it just described them in a language that made them unrecognizable.

It caught the stone and water symbolism — “stones as symbols of clarity, love, and abundance, formed under pressure and holding collective memory.” I’d worked on that thread deliberately, over months, with Claude as my writing partner. We built it together, scene by scene, knowing exactly what we were doing. The algorithm found the thread and described it like a lab report. The same pattern, stripped of everything that made it feel like discovery.

It caught something about the way music functions — not as entertainment or even rebellion, but as what it called “a conduit to transcendence.” That’s not language I would use. But the observation underneath it is accurate. The Grateful Dead shows in that book aren’t concert scenes. They’re the closest thing to church I’d ever experienced. The algorithm saw that without knowing what church means to a kid who never had one.

Where the summary falls apart is exactly where you’d expect a machine to fall apart: in the texture. The felt reality of things. It cycles back to the same observations — trauma, connection, nature, repeat — like someone stirring a pot that’s already been stirred. Here’s a characteristic passage:

“The narrator seeks a love that operates by different physics — patient, deep, and cyclical like tides. The importance of slow, deliberate connection is emphasized as a way to find stability and understanding.”

That’s not wrong. But it could describe a hundred books. It could describe a self-help pamphlet. What it can’t describe is sitting in the passenger seat of a cattle rancher’s truck outside Winnemucca, Nevada, at four in the morning, not saying anything, watching the headlights eat the road, and feeling for the first time in your life that silence between two people doesn’t have to mean something is broken.

That’s what I mean by texture. The machine reads theme. The writer lives moment. The book sits somewhere between the two, or tries to.

The summary also caught Steve — and got him wrong. The algorithm called him a Vietnam vet whose arc illustrates “how war and systemic violence shape individual destinies.” Steve never went to Vietnam. A school psychiatrist gave him a psychological 4F that kept him out. His paranoia didn’t come from combat. It came from somewhere inside him that no draft board or algorithm could have mapped, and it ended with him being killed by police decades later — a death I compressed into the narrative because the truth of who he was demanded that the reader not be let off the hook.

The machine identified Steve’s importance to the book. Then it invented a biography for him that sounded right but wasn’t. It needed a cause for his damage, so it supplied the most obvious one. That’s exactly what a machine does. And it’s exactly what a writer can’t afford to do.

I spent two months trying to put Steve back together as a person. The algorithm spent a fraction of a second turning him into a category.

This is the fundamental tension of any summary, human or machine — the gap between pattern and presence. Patterns are real. They matter. But presence is what makes you turn the page.

There’s something the summary gets right by accident, though. In its relentless circling — trauma, nature, connection, music, trauma, nature, connection — it inadvertently mimics the actual structure of the journey. Because hitchhiking is circular. You’re not going somewhere in any meaningful narrative sense. You’re going, and then you’re somewhere, and then you’re going again. The people change. The landscape changes. You don’t change, or you do, but so slowly you can’t see it, the way you can’t see your own face aging in the mirror every morning.

The algorithm’s repetitiveness frustrated me at first. Then I realized it was doing what the road does. Coming back to the same things, slightly different each time. That’s either a defense of the algorithm or an indictment of it. I’m not sure which.

What fascinates me most is what the machine emphasized and what it ignored.

It gave significant space to the cosmic bus, the UFO sequences, the peyote visions in the redwoods. Algorithmically, I understand why. Those scenes are linguistically dense. They generate the most “meaning” per paragraph. They light up whatever circuits a language model uses to identify significance.

But the scenes at the book’s emotional center, the ones readers have told me stopped them cold, are quieter than that. A kitchen with no smell of Sunday roast. A grandmother’s tinsel, one strand at a time. Lucy humming a frequency in a mountain camp that made something inside you stop clenching. The machine sailed right past those moments because they don’t announce themselves as important. They don’t have thesis statements.

In my experience, the things that matter most rarely do.

I asked another AI — the one I actually write with — to generate an image for this essay. What came back was a painting split diagonally down the middle. On one side, a young hitchhiker at dawn, thumb out, guitar at his feet, the sky blazing amber and copper in the style of Turner. On the other side, the same scene rendered as data — cool blue, columns of text, the flat glow of a terminal screen.

Two halves. Same road running through both.

I stared at it for a while, and I thought about Mr. Czaja’s basket. The laminated photographs. How the whole point was that you didn’t analyze the image. You let it analyze you. You sat with it until it opened something, and then you followed that opening wherever it led.

The algorithm did the opposite. It took a finished book — a book that began as feeling and image and the ache of memory — and read it backward, from conclusion to cause, from pattern to data point. It’s an impressive trick. But it’s the reverse of how the thing was made.

I’m sharing this not as a complaint about artificial intelligence. I’ve been working with AI for two years now, building this memoir in conversation with Claude, using it as a thinking partner, an editor, a sounding board. That collaboration produced something I couldn’t have made alone. I know what the technology is capable of, and I know its weaknesses and traps. I’m grateful for it, and I use it as a tool, not as a replacement for my interior life and process.

But there’s a difference between an AI that works with you over months, absorbing your voice and your history and your particular way of seeing, and an algorithm that scans a finished document and sorts it into buckets. The first is collaboration. The second is triage.

The Adobe summary triaged my book. It identified the major injuries, catalogued the symptoms, and wrote up a report. What it couldn’t do was tell you what it felt like to be the patient. That part is still the writer’s job, and always will be.

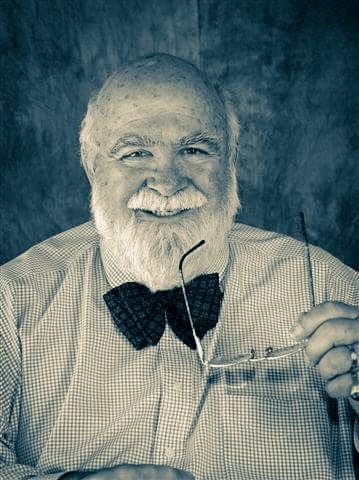

I turned seventy-four last week. I wrote Surfing the Interstates not to explain what happened to me in 1973 but to find out. The writing was the discovery. Every chapter peeled back something I hadn’t understood about myself, about my family, about the particular way damage gets handed down and, if you’re lucky, set down.

The machine read the book and saw patterns of trauma and transcendence. I wrote the book and found something simpler than that. I found out that a twenty-one-year-old kid with his thumb out on a hot July afternoon in Armonk, New York, was braver than the seventy-year-old man who finally sat down to tell the story. And that the half century between them was the real distance I had to cross.

No algorithm is going to summarize that. But I’m glad one tried.

Mr. Czaja would have understood that, too. Sometimes you pick the image from the basket and it doesn’t give you what you expected. Sometimes what it gives you is better. You just have to sit with it long enough to find out.

Surfing the Interstates: A 1973 Hitchhiking Memoir
